This piece is generative and contains sound. A short capture of the piece is shown below (enable sound from the video controls). You are encouraged to view the original on hic et nunc for the full experience.

In Process is a series that examines the inspiration, methods, and tools used by artists to create their work. Each episode focuses on a specific piece.

Find all episodes of In Process on the Ep. 0: Index page.

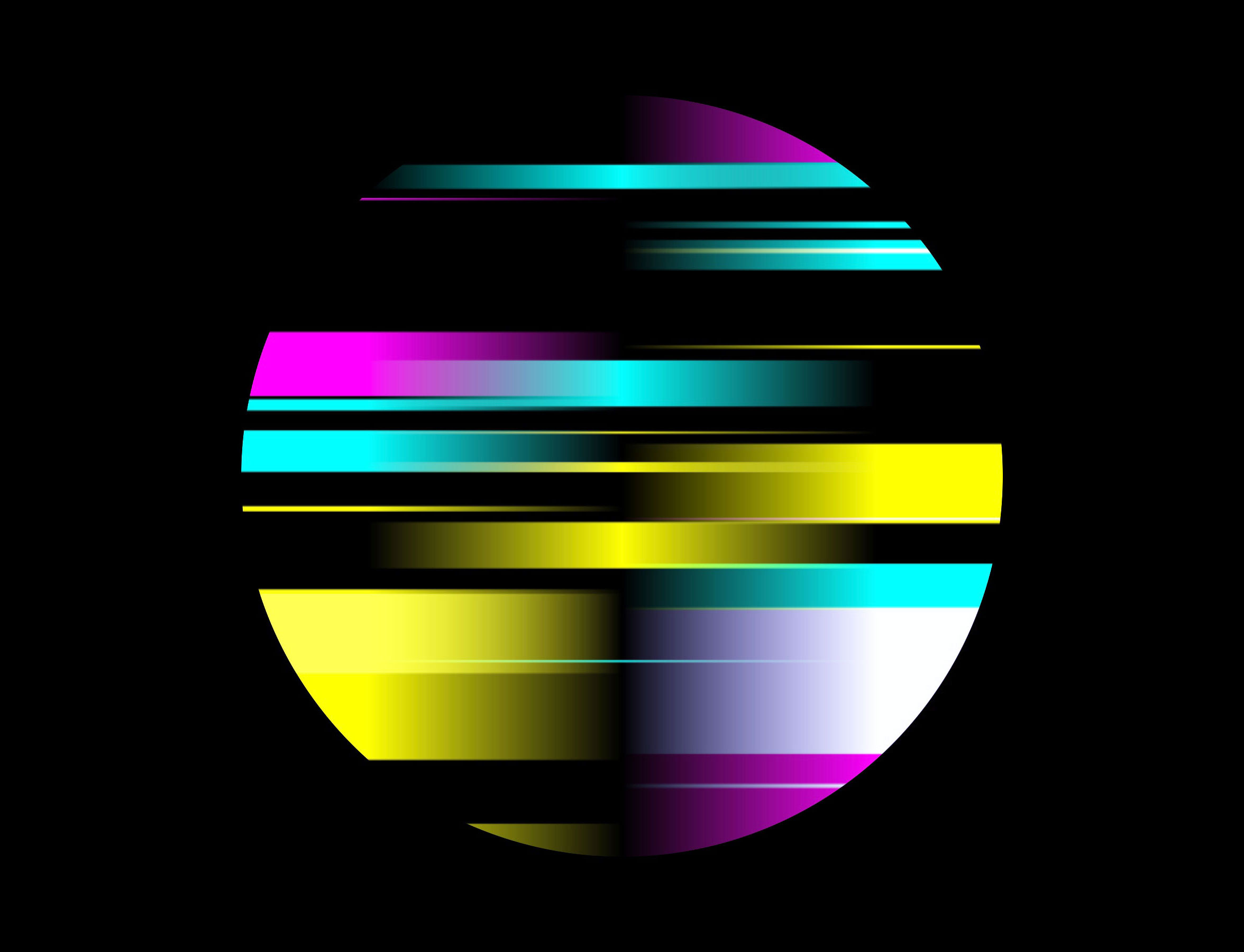

In this episode, we explore Rooms With No Views by Not James Murphy (pictured above).

This piece is both the first generative piece and the first piece with sound that I’ve covered on In Process, and it’s a stunning example of the form.

One of the beauties of generative pieces is the subtle differences that occur with each viewing of the work. This piece really nails those subtleties.

It’s no wonder then that the piece is the culmination of 10+ years of experiments with music and code.

What was the inspiration for Rooms With No Views?

The genesis of this work was in 2012, when I wrote a piece of JavaScript that allowed me to quickly remix simple musical compositions within a browser window.

At the time I was becoming jaded with traditional compositional methods as I’d been writing electronic music for over 10 years. This method allowed me to garner ideas for melody and arrangement for my own music.

Ultimately, however, I became more interested in this method of composition as its own form of musical expression, rather than using it as a mere compositional tool.

This simple piece of JavaScript from way back then has evolved into the recent series you hear today, collectively called “Music For Liminal Spaces”, a play on Brian Eno’s “Music For Airports”.

What I would come to learn is that this practice is actually an established musical field called aleatoric music.

I became fascinated with it and the effect it had on my listening experience. Suddenly I felt very aware of the present moment, in a perpetual anticipatory state that seemed to heighten the experience of “Now”.

Clearly, I wasn’t the only person to make this connection, as I discovered the Clock Of The Long Now, in part designed by none other than Brian Eno himself.

This entire concept sat on a hard drive for almost a decade, but upon discovering hic et nunc and seeing that I could mint browser-based art pieces, I finally thought there might be a home for the work.

After experimenting with a few different projects, I finally settled on my aleatoric music work and set about reworking my initial code.

As I began this new series I wanted to expand on the basic concept in the hope of yielding more conceptual inspiration.

One tangent I went on was exploring what I consider to be a particularly interesting aspect of this form of music: the experience of familiarity in spite of the unique arrangement of the composition you hear each time it’s played.

This led me to the field of liminal spaces, something very much still in the current zeitgeist.

Often liminal spaces are described as spaces that are familiar to the point of mundanity and yet are somehow unnerving, or strangely intriguing, perhaps due to their lack of human presence.

The more I delved into the concept of liminality in general, the more it seemed to fit perfectly with my work.

The aforementioned idea of the stretching of the present moment could be described as the liminality of time: how something can seem transitory and stagnant at the same time.

The method of composition could be a liminal act, not composed but also not completely random, somewhere between order and chaos.

The visuals are also an homage to liminality, specifically the terrestrial TV test card which would often appear when that evening’s schedule had ended and would remain until the next morning, again evoking this feeling of being in between states. Not on, but also not off.

My longer-term, overarching influences for these works are Vangelis, Tangerine Dream, Philip Glass and Arvo Pärt, but each new piece usually comes from listening to a particular artist that day.

Some direct influences that I can recall throughout the series include Autechre, Aphex Twin, Pye Corner Audio, Ryuichi Sakamoto, Caterina Barbieri and Alessandro Cortini.

See more works from the series on Not James Murphy’s hic et nunc profile →

Describe the technical process of the piece. What medium and tools were most important to creating it?

Compositions all begin with my 2015 MacBook Pro running Propellerhead’s Reason and my old but trusty Edirol PCR300. They are initially composed like a traditional piece of music.

Once I reach a stage where I feel the elements are all working well together (usually 4 to 6 layers of instrumentation), I break up the MIDI (musical data) into individual notes and export each one as its own .mp3 sample.

These samples become the basis of each piece.

I’ll then listen back to the piece multiple times through the code.

This stage reveals a lot about the relationship between the individual elements.

From there I can go back and forth between the Reason file and the code, tweaking samples until I’m happy.

After that, I’ll experiment with different parameters within the code. It has been written so that I can easily change the rate of notation, the amount of note variation and so on. This way I can control the chaos to some degree to achieve a general sense of harmony and balance to the piece, ideally creating something that people will want to listen to again and again.
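As a rough illustration of how parameters like these might work, here is a minimal JavaScript sketch. It is not the artist's actual code: the function names, the `notesPerSecond` and `variation` parameters, and the idea of falling back to the originally composed note order are all assumptions made for the example. A `variation` of 0 replays the composed order, a value of 1 picks every note at random, and anything in between sits somewhere between order and chaos.

```javascript
// Small seeded random number generator (mulberry32), used here only so
// that a given run can be reproduced while experimenting.
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Hypothetical helper: builds a list of { time, sample } events for one layer.
// - samples: sample file names in their originally composed order
// - notesPerSecond: stands in for the "rate of notation"
// - variation: 0..1, the chance that a note is chosen at random
function scheduleLayer(samples, options) {
  const { notesPerSecond, variation, durationSeconds } = options;
  const random = options.random || Math.random;
  const count = Math.floor(durationSeconds * notesPerSecond);
  const events = [];
  for (let i = 0; i < count; i++) {
    const useRandomNote = random() < variation;
    const index = useRandomNote
      ? Math.floor(random() * samples.length) // aleatoric choice
      : i % samples.length;                   // composed order, looped
    events.push({ time: i / notesPerSecond, sample: samples[index] });
  }
  return events;
}

// Example: one layer of three note samples (file names are made up).
const layer = scheduleLayer(["c3.mp3", "e3.mp3", "g3.mp3"], {
  notesPerSecond: 2,
  variation: 0.3,
  durationSeconds: 4,
  random: mulberry32(2012),
});
console.log(layer);
```

In a browser, each event could then be handed to an audio API for playback at its `time`; that part is left out here so the sketch stays self-contained.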

As for the visuals… they aren’t actually connected with the audio. An earlier version did link the two, but I found it a bit predictable, like a bad version of the Windows Media Player visualiser! 😂

Instead, the visuals are left aleatory, meaning that they are randomised for the most part and any perceived connection between them and the sound is only in the mind of the viewer/listener.
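To make the idea of visuals that ignore the audio concrete, here is a small JavaScript sketch under stated assumptions. It is not taken from the piece: the coloured bars are only a loose nod to a test card, and `randomBars` and `drawBars` are hypothetical names. The point it illustrates is simply that the drawing code reads from a random source and never from the sound.

```javascript
// Hypothetical helper: picks a random colour for each vertical bar.
// Nothing here looks at the audio; the only input is a random source.
function randomBars(barCount, random = Math.random) {
  const bars = [];
  for (let i = 0; i < barCount; i++) {
    const hue = Math.floor(random() * 360);
    bars.push({ index: i, color: `hsl(${hue}, 80%, 50%)` });
  }
  return bars;
}

// Draws equal-width bars onto a 2D canvas context
// (for example, one obtained from canvas.getContext("2d") in a browser).
function drawBars(ctx, bars, width, height) {
  const barWidth = width / bars.length;
  bars.forEach((bar) => {
    ctx.fillStyle = bar.color;
    ctx.fillRect(bar.index * barWidth, 0, barWidth, height);
  });
}
```

Calling `drawBars(ctx, randomBars(8), canvas.width, canvas.height)` on a timer would redraw the bars independently of whatever notes happen to be playing.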

Where is the best place to learn more about your work?

You can find more of my work on hic et nunc or follow me on Twitter for updates about new pieces.